Why do dinosaurs have a big impact in the future? – or does a high impact factor mean good research?

Why do dinosaurs have a big impact in the future? Before I answer this question, let’s go back in time. Not 240 million years ago to T-Rex and Velociraptor, but just to the year 1975, when the first SCI Journal Citation Reports were published [1]. The purpose of these reports was to help librarians optimize their collections so that libraries covered the most widely read journals. However, the influence of the metrics used in these reports grew over time, and today the impact factor is widely used as a metric for good research. But is it true that a high impact factor means good research?

What, exactly, is the impact factor?

The impact factor was developed by Eugene Garfield at the Institute for Scientific Information. It was calculated by the private company Thomson Reuters’ Intellectual Property and Science Business from 1992 until 2016 and since then by Clarivate, a company that also owns, for example, EndNote and Web of Science [2].

The impact factor is a scientometric index. For a given year, it is the ratio between the number of citations received in that year by items the journal published during the two preceding years and the total number of citable items the journal published during those two years.
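The ratio described above can be written as a few lines of Python. This is only a minimal sketch: the function name `impact_factor` and the journal numbers are hypothetical, chosen for illustration, and are not taken from any real journal or from Clarivate's own procedure.

```python
def impact_factor(citations_to_prev_two_years: int,
                  citable_items_prev_two_years: int) -> float:
    """Citations received in year Y by items published in Y-1 and Y-2,
    divided by the number of citable items published in Y-1 and Y-2."""
    if citable_items_prev_two_years <= 0:
        raise ValueError("the journal must have published at least one citable item")
    return citations_to_prev_two_years / citable_items_prev_two_years


# Hypothetical journal: 200 citable items published in 2013 and 2014,
# which were cited 500 times during 2015.
example_if = impact_factor(500, 200)
print(example_if)  # 2.5
```

Note that the result is an average over all articles of the journal, so it says nothing about how often any single article was cited.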

Why do we measure scientific output with the impact factor … and why shouldn’t we?

There are two main arguments for the use of impact factors. Firstly, it is a metric that is already in place. Secondly, it would be too laborious and subjective for reviewers at grant agencies to judge the quality of researchers’ work without the help of the impact factor [3].

However, there are many more arguments against using the impact factor to measure scientific output.

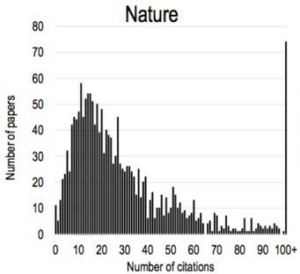

One criticism of the impact factor is the wide variation in citations among individual articles in the same journal. Since citation counts are not normally distributed, the median rather than the mean could be the more appropriate measure. Larivière and colleagues showed the citation distributions of 11 different scientific journals in 2015. The skewed distribution is especially visible for the journals Nature and Science (see graph) [4]. Another example that exposes the flaw in the system is the article “A short history of SHELX”. This paper served as the reference whenever the SHELX programs were used, and it received more than 5,624 citations. This single article raised the impact factor of the journal Acta Crystallographica A from 2.051 in 2008 to 49.926 in 2009 [5].

This graph from Larivière and colleagues [4] shows that the number of citations per article in the journal Nature in 2015 is not normally distributed.
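To see why the median can be more informative than the mean for skewed citation counts, consider the following small sketch. The citation counts are invented for illustration (they are not data from Larivière et al. or from Acta Crystallographica A), and the helper `summarize` is a hypothetical name.

```python
from statistics import mean, median

# Hypothetical citation counts for ten articles in one journal.
typical = [0, 1, 1, 2, 2, 3, 3, 4, 5, 9]

# The same journal after publishing one extremely highly cited paper.
with_outlier = typical + [5000]


def summarize(citations):
    """Return (mean, median) of a list of per-article citation counts."""
    return mean(citations), median(citations)


for label, counts in [("typical", typical), ("with outlier", with_outlier)]:
    avg, med = summarize(counts)
    print(f"{label}: mean = {avg:.1f}, median = {med}")
```

In this toy example a single article lifts the mean from 3 to more than 450, while the median only moves from 2.5 to 3. A mean-based metric such as the impact factor reacts in the same way to one heavily cited paper.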

Another criticism is that papers published in high-impact journals are more likely to be retracted. Cokol and his colleagues found that high-impact journals retract articles much more frequently than journals with lower impact. There are two main possible reasons for this: either high-impact journals publish more flawed articles, or their articles are checked more thoroughly after publication [6,7].

A closer look at the definition of the impact factor raises the question of what counts as “a citable item” in the denominator. In 2006 the PLoS Medicine Editors contacted Thomson Scientific to ask how a citable item is defined. Since they did not receive a clear definition, they concluded “that science is currently rated by a process that is itself unscientific, subjective and secretive” [8]. Citations of items that are considered uncitable are still counted towards the impact factor, which creates an incentive to produce more editorials, comments and other written pieces.

The journal Folia Phoniatrica et Logopaedica showed the flaws of the impact factor by publishing an editorial that cited 66 of its own papers from the previous two years. This increased its impact factor by 119% [9]. Another way to increase the impact factor is “coercive citation”, a practice in which an editor forces researchers to cite other recently published articles from the editor’s journal [10].

Movements to improve the metrics

In 2013 the San Francisco Declaration on Research Assessment (DORA) was released by the American Society for Cell Biology and a group of editors and publishers. It states that the impact factor should not be used to measure the quality of individual research articles. With this declaration they wanted to start a discussion and to stress that the scientific content of a paper, not the impact factor, matters most. In addition, the number of references allowed in a paper should not be limited, so that researchers can cite the primary literature rather than reviews [11].

Some publishing platforms, like F1000 and PLOS, do not show their impact factor. Instead, they offer article-level metrics or altmetrics to measure the impact of individual articles. The company Altmetric measures the impact a published paper has on society. It provides scores for how often a paper is discussed on social media or in the news, how often it is downloaded or saved in a citation manager, how often it is cited, and how often it is recommended on platforms like F1000.

But what does that have to do with Brachiosaurus or Pterodactyl? Altmetrics show not only the impact a paper has on society, but also which topics society finds interesting. Papers about sports and dinosaurs have been shown to have a higher altmetrics score, just because dinosaurs are cool! [12] So the question that arises is whether the impact of a scientific study can be measured in a few numbers and predicted by editors or by society as a whole. Maybe we as the scientific community must agree to judge scientists on the knowledge they generate and on their ideas.

Taken together, predicting the impact a research discovery will have in the future is difficult or maybe even impossible. Neither editors nor the number of retweets let us look into the crystal ball; instead, we should start judging the actual content of a paper and the novelty of its ideas. How exactly this should be done is still an open question: we need an unprejudiced discussion in which researchers, publishers, universities, and authorities engage in a selfless manner. But please do not stop publishing stories about Triceratops or Stegosaurus!

- Garfield, E. COURTESY OF CLARIVATE ANALYTICS. 1.

- Cross, J. ISI/Thomson Scientific – It’s Not Just about Impact Factors. Ed. Bull. 2005, 1 (1), 4–7. https://doi.org/10.1080/17521740701694957.

- McKiernan, E. C.; Schimanski, L. A.; Muñoz Nieves, C.; Matthias, L.; Niles, M. T.; Alperin, J. P. Use of the Journal Impact Factor in Academic Review, Promotion, and Tenure Evaluations. eLife 2019, 8, e47338. https://doi.org/10.7554/eLife.47338.

- Larivière, V.; Kiermer, V.; MacCallum, C. J.; McNutt, M.; Patterson, M.; Pulverer, B.; Swaminathan, S.; Taylor, S.; Curry, S. A Simple Proposal for the Publication of Journal Citation Distributions; preprint; 2016. https://doi.org/10.1101/062109.

- Johnston, J.; Road, M. G.; Dimitrov, J. D.; Kaveri, S. V.; Bayry, J.; Fitzsimmons, J. M.; Field-Naturalists’, O.; Po, W.; Cintas, P.; de, D. Metrics: Journal’s Impact Factor Skewed by a Single Paper. 1.

- Garvalov, B. K. Mobility Is Not the Only Way Forward. EMBO Rep. 2007, 8 (5), 422–422. https://doi.org/10.1038/sj.embor.7400969.

- Fang, F. C.; Casadevall, A. Retracted Science and the Retraction Index. Infect. Immun. 2011, 79 (10), 3855–3859. https://doi.org/10.1128/IAI.05661-11.

- The PLoS Medicine Editors. The Impact Factor Game. PLoS Med. 2006, 3 (6), e291. https://doi.org/10.1371/journal.pmed.0030291.

- Foo, J. Y. A. Impact of Excessive Journal Self-Citations: A Case Study on the Folia Phoniatrica et Logopaedica Journal. 9.

- Wilhite, A. W.; Fong, E. A. Coercive Citation in Academic Publishing. Science 2012, 335 (6068), 542–543. https://doi.org/10.1126/science.1212540.

- Ylä-Herttuala, S. From the Impact Factor to DORA and the Scientific Content of Articles. Mol. Ther. 2015, 23 (4), 609. https://doi.org/10.1038/mt.2015.32.

- Eysenbach, G. Can Tweets Predict Citations? Metrics of Social Impact Based on Twitter and Correlation with Traditional Metrics of Scientific Impact. J. Med. Internet Res. 2011, 13 (4), e123. https://doi.org/10.2196/jmir.2012.

https://commons.wikimedia.org/wiki/File:Bios_robotlab_writing_robot.jpg

